Embedding a voice agent

There are two paths to put a voice agent inside your product:

- Embed a pre-built component. Drop a widget into the app you already ship and get a voice session behind a single call.

- Integrate the Atoms WebSocket yourself. More code to own, full control over the UI and the audio session.

The table below maps each platform to both paths.

At a glance

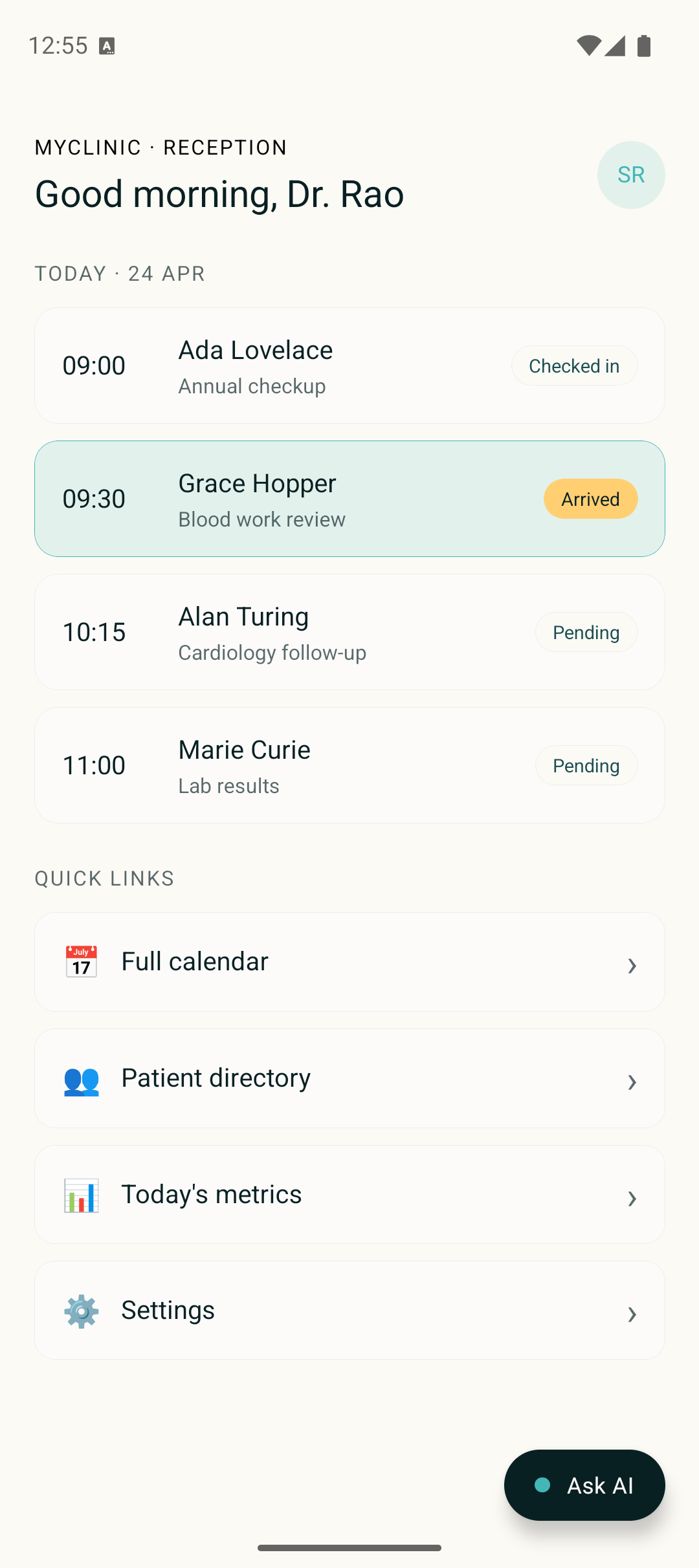

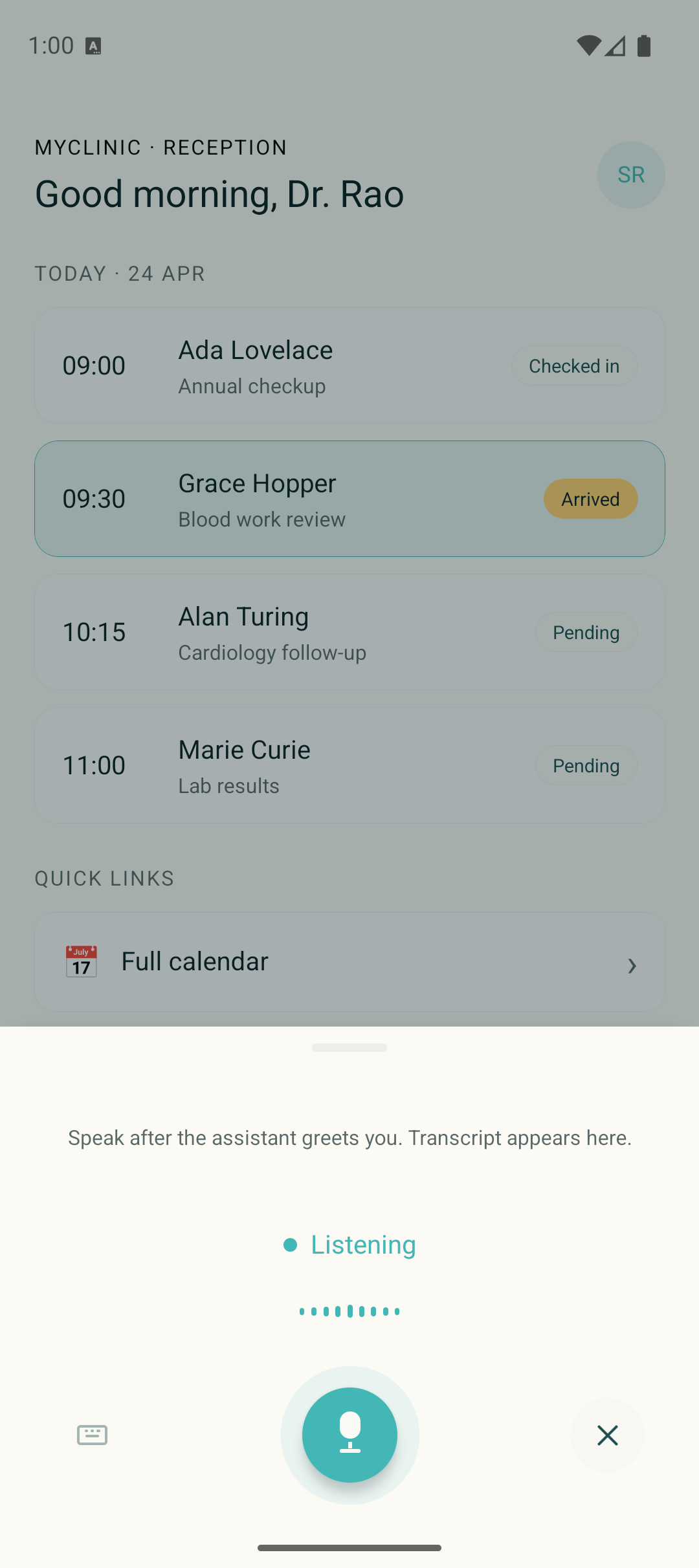

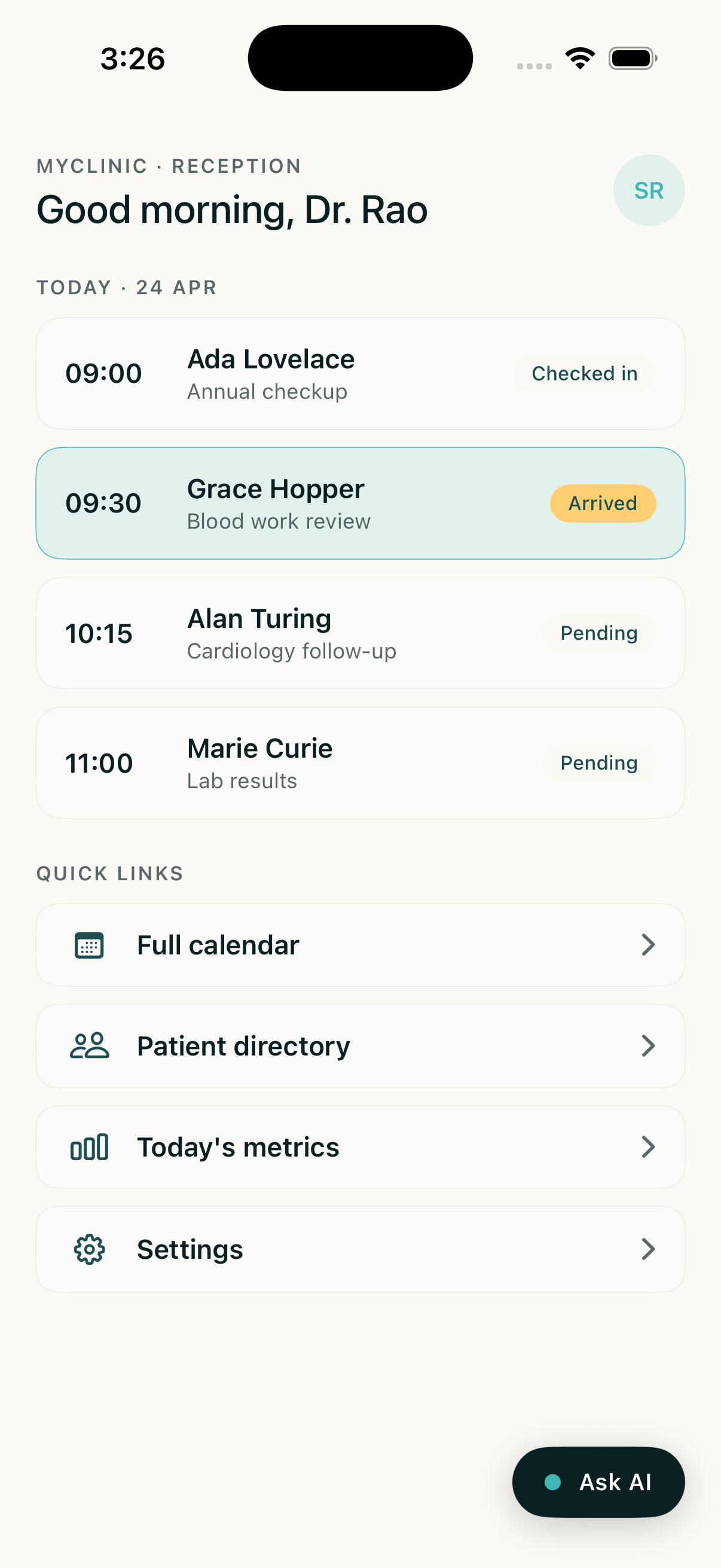

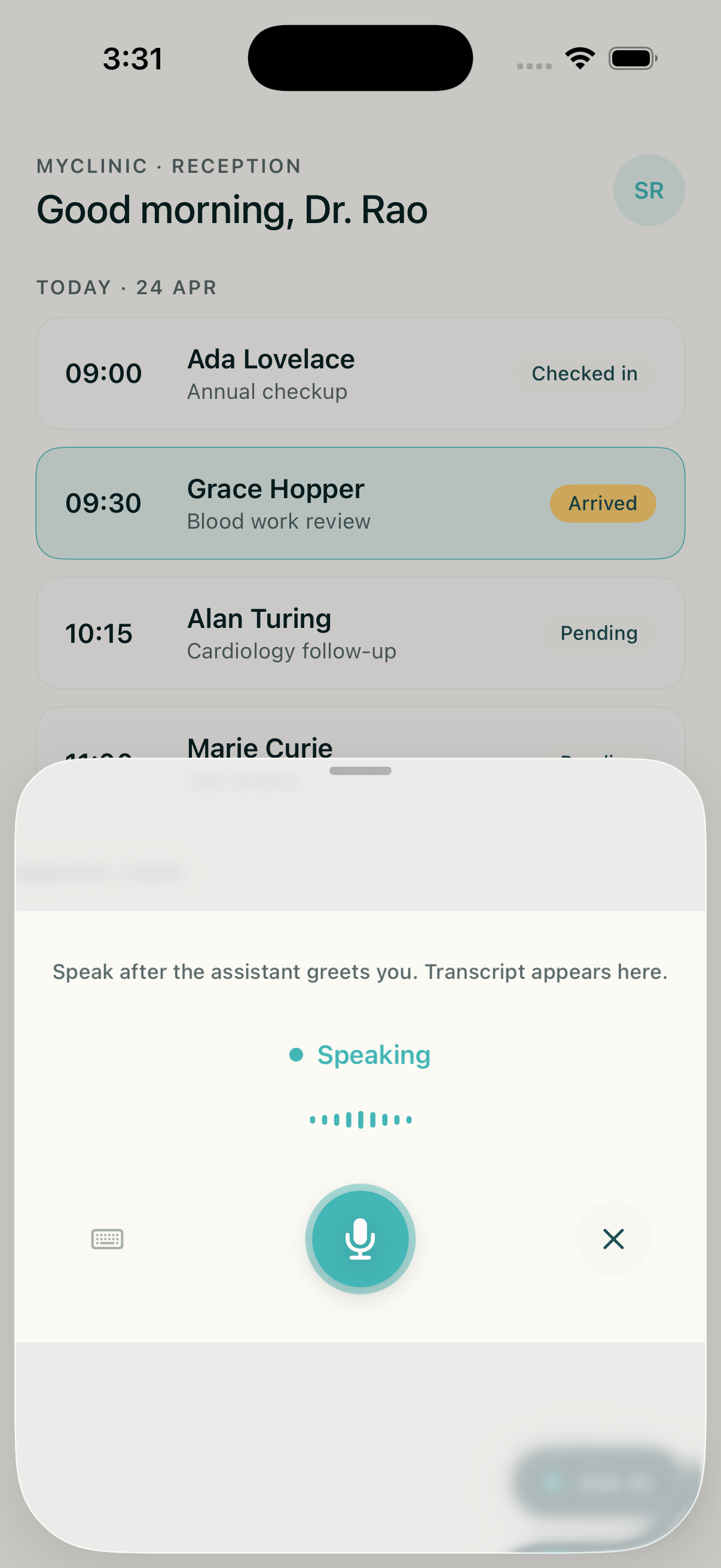

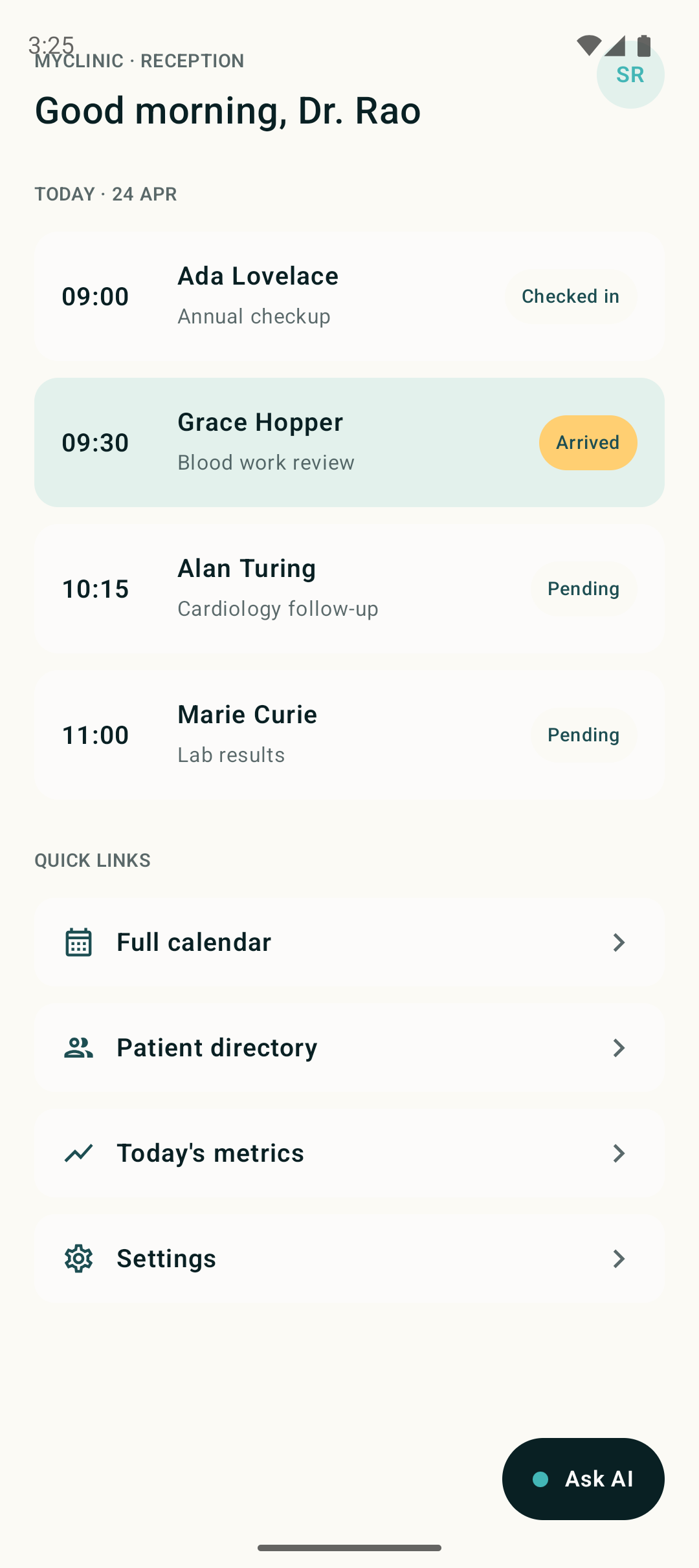

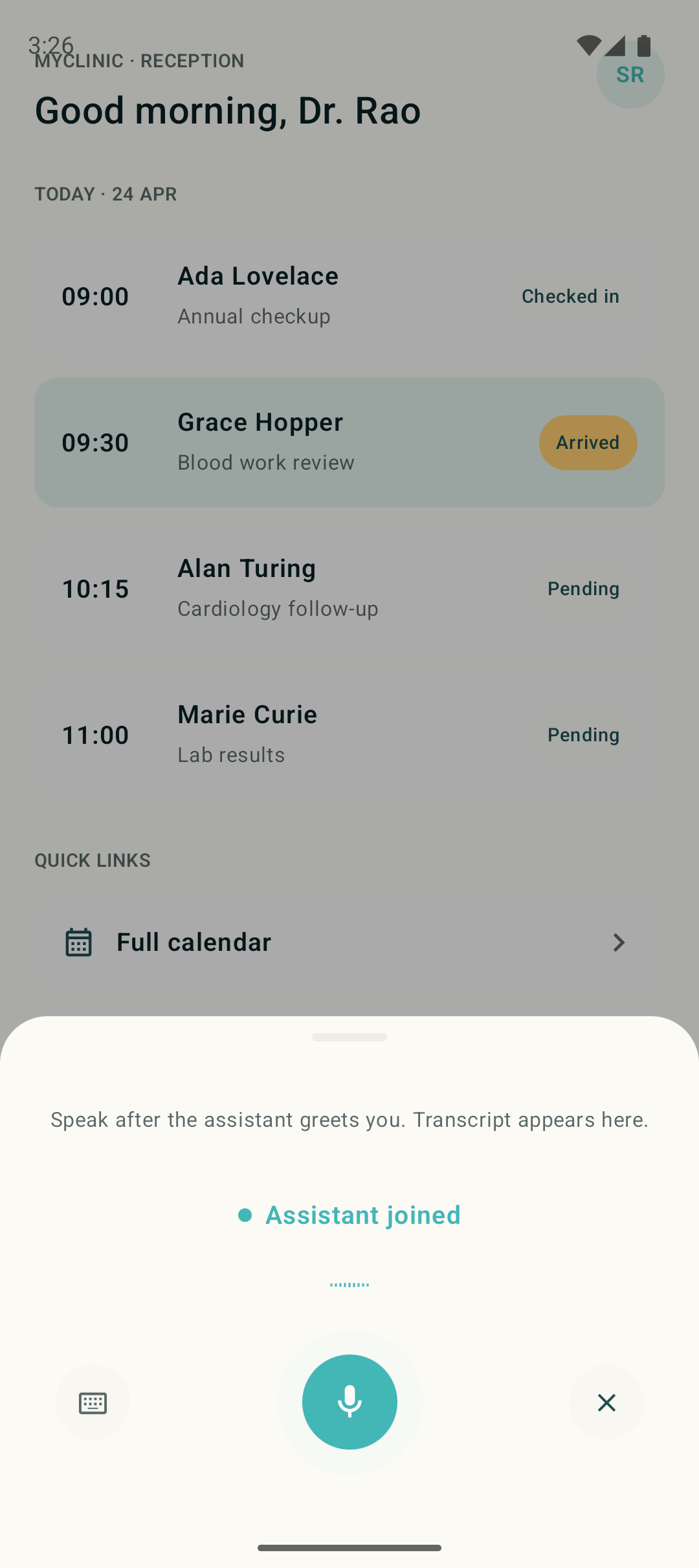

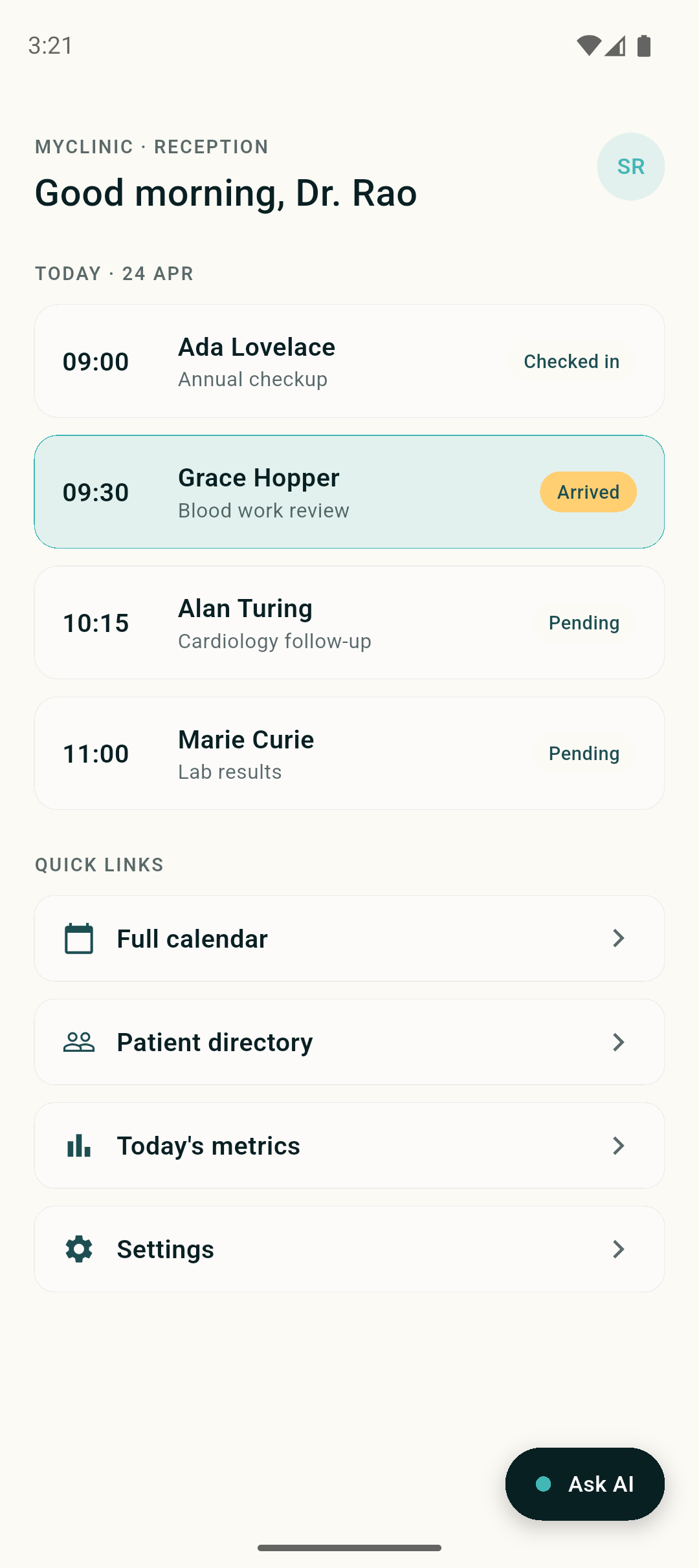

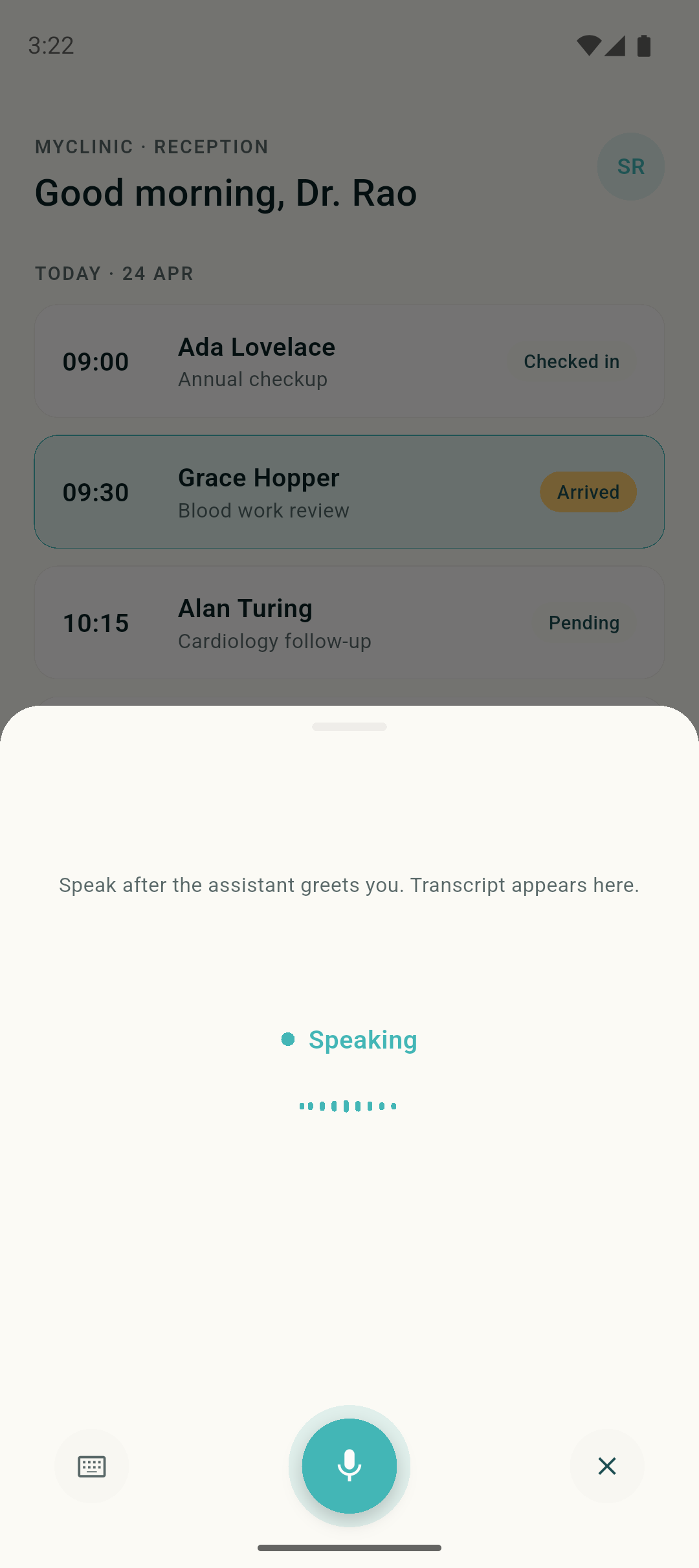

Every mobile widget follows the same pattern: a floating pill in the host app, a bottom sheet with a live voice session on tap, and a single public API that accepts an API key and an agent ID. The implementations are independent per platform, with no shared runtime, so each widget looks and feels native.
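To make the shared pattern concrete, here is a minimal, platform-neutral sketch of the widget lifecycle as a state machine, written in TypeScript. It is not taken from any cookbook: `WidgetConfig`, `WidgetState`, `reduce`, and `createWidget` are hypothetical names, and each native widget implements the equivalent in its own UI framework.

```typescript
// Hypothetical sketch of the shared widget pattern: pill -> bottom sheet -> live session.
// None of these names come from the cookbooks; they only model the behavior described above.

interface WidgetConfig {
  apiKey: string;  // Atoms API key
  agentId: string; // which agent the session talks to
}

type WidgetState =
  | { kind: "pill" }                    // collapsed floating pill in the host app
  | { kind: "connecting" }              // sheet open, WebSocket handshake in flight
  | { kind: "live"; muted: boolean }    // sheet open, voice session running
  | { kind: "error"; message: string }; // sheet open, session failed

type WidgetEvent =
  | { type: "tap" }
  | { type: "connected" }
  | { type: "failed"; message: string }
  | { type: "toggleMute" }
  | { type: "dismiss" };

function reduce(state: WidgetState, event: WidgetEvent): WidgetState {
  switch (event.type) {
    case "tap":
      // Only a tap on the collapsed pill opens the sheet and starts a session.
      return state.kind === "pill" ? { kind: "connecting" } : state;
    case "connected":
      return state.kind === "connecting" ? { kind: "live", muted: false } : state;
    case "failed":
      // A failure only matters while the sheet (and therefore a session) is open.
      return state.kind === "pill" ? state : { kind: "error", message: event.message };
    case "toggleMute":
      return state.kind === "live" ? { kind: "live", muted: !state.muted } : state;
    case "dismiss":
      // Closing the sheet tears the session down and returns to the pill.
      return { kind: "pill" };
  }
}

// The single public entry point: credentials in, initial (collapsed) state out.
function createWidget(config: WidgetConfig): WidgetState {
  if (!config.apiKey || !config.agentId) {
    throw new Error("apiKey and agentId are required");
  }
  return { kind: "pill" };
}
```

Keeping the transitions in one pure function makes the pill, sheet, and mute states easy to unit-test independently of the audio and WebSocket code.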

If you need full control of the screen instead of a drop-in component, the reference apps show the same transport and audio code in a full-screen voice UI.

Web

Paste two lines into any HTML page:

That renders a floating call bubble on the page. Clicking it opens a voice session with your agent. The component is a web-standard Custom Element, so it works with any framework (React, Vue, Svelte) or with plain HTML. Configure theme, position, and agent ID through HTML attributes.
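Attribute-driven configuration is the standard Custom Elements mechanism: an element lists the attributes it watches in `observedAttributes` and re-reads them in `attributeChangedCallback`. The sketch below illustrates that mechanism with a hypothetical `<voice-agent-demo>` element and hypothetical `agent-id`, `theme`, and `position` attributes; it is not the widget's source, and the real tag and attribute names are in the Widget features reference.

```typescript
// Hypothetical attribute names and values, for illustration only.
type Theme = "light" | "dark";
type Position = "bottom-right" | "bottom-left";

interface EmbedConfig {
  agentId: string;
  theme: Theme;
  position: Position;
}

// Pure parser: works with el.getAttribute or any other name -> value lookup.
function parseEmbedConfig(getAttr: (name: string) => string | null): EmbedConfig {
  const agentId = getAttr("agent-id");
  if (!agentId) throw new Error('missing required "agent-id" attribute');
  const theme = getAttr("theme") === "dark" ? "dark" : "light"; // default: light
  const position =
    getAttr("position") === "bottom-left" ? "bottom-left" : "bottom-right"; // default: bottom-right
  return { agentId, theme, position };
}

// Browser-only: register the hypothetical element. Skipped where the DOM is absent (e.g. Node).
if (typeof HTMLElement !== "undefined" && typeof customElements !== "undefined") {
  class VoiceAgentDemo extends HTMLElement {
    // The browser calls attributeChangedCallback whenever one of these changes.
    static observedAttributes = ["agent-id", "theme", "position"];

    attributeChangedCallback(): void {
      if (!this.hasAttribute("agent-id")) return; // wait until the required config is present
      const config = parseEmbedConfig((name) => this.getAttribute(name));
      this.dataset.theme = config.theme; // placeholder for real rendering
    }
  }
  customElements.define("voice-agent-demo", VoiceAgentDemo);
}
```

Parsing lives in a plain function so it can be tested without a DOM.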

To customize the widget’s appearance or placement, see the Widget features reference.

React Native

The React Native widget cookbook ships an Expo app with the widget wired into a MyClinic receptionist host app. It uses react-native-audio-api for mic capture and gapless playback, owns the WebSocket session, and exposes a mute button plus a live transcript.
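Gapless playback mostly comes down to scheduling. react-native-audio-api is modeled on the Web Audio API, where each buffer can be started at an exact time on the audio clock. A minimal sketch, assuming a Web Audio-style API and a hypothetical `GaplessScheduler` helper (not the cookbook's code): each chunk starts where the previous one ends, or at the current time if the queue has run dry.

```typescript
// Gapless playback sketch (hypothetical helper, not part of the cookbook's code).
// Each incoming audio chunk is scheduled to start exactly where the previous one ends
// on the audio clock, instead of "whenever it arrives", so chunks play back to back.
class GaplessScheduler {
  private nextStart = 0; // audio-clock time (s) at which already-queued audio ends
  private readonly sampleRate: number;

  constructor(sampleRate: number) {
    this.sampleRate = sampleRate;
  }

  // Returns the start time for a chunk of `frames` samples. `now` is the current
  // audio-clock time (e.g. AudioContext.currentTime in a Web Audio-style API).
  schedule(frames: number, now: number): number {
    // If the queue ran dry (e.g. a network stall), start at `now` instead of in the past;
    // otherwise start exactly where the previous chunk ends.
    const start = Math.max(this.nextStart, now);
    this.nextStart = start + frames / this.sampleRate;
    return start;
  }

  // Forget queued timing, e.g. after you stop all scheduled sources.
  reset(): void {
    this.nextStart = 0;
  }
}
```

Pass the returned time to `start(when)` on the `AudioBufferSourceNode` that plays the chunk. Scheduling against the audio clock, rather than calling `start()` as each WebSocket message arrives, avoids the small gaps and overlaps that message jitter would otherwise cause.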

For integrating the transport into an existing RN app without the widget wrapper, follow the React Native integration guide.
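When you own the transport, you also own the mic path. Web Audio-style capture APIs typically deliver Float32 samples in [-1, 1], which you convert before sending. A minimal sketch, assuming the agent accepts 16-bit little-endian mono PCM (confirm the actual input format in the Realtime Agent WebSocket API reference); `floatToPcm16` and `encodeMicFrame` are hypothetical helpers.

```typescript
// ASSUMPTION: the agent expects 16-bit little-endian mono PCM. Check the API reference.
function floatToPcm16(samples: Float32Array): Uint8Array {
  const out = new DataView(new ArrayBuffer(samples.length * 2));
  for (let i = 0; i < samples.length; i++) {
    // Clamp to [-1, 1], then scale so -1 -> -32768 and 1 -> 32767.
    const s = Math.max(-1, Math.min(1, samples[i]));
    out.setInt16(i * 2, s < 0 ? s * 0x8000 : s * 0x7fff, true); // true = little-endian
  }
  return new Uint8Array(out.buffer);
}

// Mute gate (hypothetical): while muted, send zeroed samples (silence) of the same length.
function encodeMicFrame(samples: Float32Array, muted: boolean): Uint8Array {
  return muted ? new Uint8Array(samples.length * 2) : floatToPcm16(samples);
}
```

This sketch implements mute by sending silence, which keeps frame timing steady; not sending frames at all while muted is the other common option. Check the API reference for which one your agent expects.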

iOS (Swift)

The iOS Swift widget cookbook is a SwiftUI component built on URLSessionWebSocketTask and AVAudioEngine, with no external dependencies. The Xcode project is generated by xcodegen from project.yml, so you can audit the full build config at a glance.

For integrating into an existing app without the widget wrapper, see the iOS Swift guide.

Android (Kotlin)

The Android Kotlin widget cookbook is a Jetpack Compose composable that uses OkHttp’s WebSocket client and the platform AudioRecord and AudioTrack APIs. A Material 3 ModalBottomSheet manages the sheet, and Gradle injects credentials via BuildConfig.

For integrating into an existing app without the widget wrapper, see the Android Kotlin guide.

Flutter

The Flutter widget cookbook is a Material widget on top of web_socket_channel, the record package for PCM capture, and flutter_pcm_sound for playback. Credentials are injected via --dart-define at build time.

For integrating into an existing app without the widget wrapper, see the Flutter guide.

Reference apps

If the widget pattern is too constrained (for example, you want voice to own the whole screen, or you want to study the transport plumbing in isolation), the cookbook also ships full-screen reference apps per platform:

Each is a runnable app with the same transport and audio code as the matching widget. Fork it, swap the agent ID, ship.

The MOBILE_COOKBOOKS.md cross-reference in the cookbook repo covers validation checklists, shared debug patterns (transport counter, mute-for-loop-debugging, Android emulator mic toggle, iOS simulator audio caveat), and a “when to use which cookbook” matrix.
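A transport counter is simple to add to any client. A minimal sketch, assuming nothing about the cookbooks' actual implementation (`TransportCounter` is a hypothetical name): tally messages and bytes in each direction, then log or display the summary. If the sent count climbs while the received count stays flat, look at the agent side of the session; if nothing is sent, look at mic capture.

```typescript
// Hypothetical transport counter: per-direction message and byte totals for debugging.
type Direction = "sent" | "received";

class TransportCounter {
  private readonly totals = {
    sent: { messages: 0, bytes: 0 },
    received: { messages: 0, bytes: 0 },
  };

  record(direction: Direction, payload: string | ArrayBuffer | Uint8Array): void {
    const bytes =
      typeof payload === "string"
        ? new TextEncoder().encode(payload).length // text frames: count UTF-8 bytes
        : payload.byteLength;                       // binary frames
    this.totals[direction].messages += 1;
    this.totals[direction].bytes += bytes;
  }

  // One-line summary, e.g. for a debug overlay or a periodic log line.
  summary(): string {
    const { sent, received } = this.totals;
    return `sent: ${sent.messages} msg, ${sent.bytes} B; received: ${received.messages} msg, ${received.bytes} B`;
  }
}
```

Call `record("sent", frame)` next to each `ws.send(frame)` and `record("received", event.data)` in the message handler. In a browser, set `ws.binaryType = "arraybuffer"` so binary frames arrive as `ArrayBuffer` rather than `Blob`.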

Roadmap

First-party SDK packages on npm, Swift Package Manager, Maven, and pub.dev are the next step. The transport, audio, and UI code in the widget cookbooks today becomes the SDK tomorrow. Until then, the cookbooks are the supported embed path.

Reference

- Realtime Agent WebSocket API. The protocol every client speaks.

- WebSocket SDK (web). The browser-side JS SDK.

- Widget features. Web widget configuration options.